"Bottom picture looks sharper to me without my glasses on"

Yeah bottom one is sharper but you'd be hard pressed to notice.


Microsoft Game Stack VRS update (Series X|S) - Doom Eternal, Gears 5 and UE5 - 33% boost to Nanite Performance - cut deferred lighting time in half

- Thread starter SenjutsuSage


Now it's the turn of Variable Rate Compute Shaders (VRCS) on Xbox Series. New talk:

Variable Rate Compute Shaders on Xbox Series X|S

Microsoft Game Dev can help you find the right mix of tools and services to fit your game development needs.

developer.microsoft.com


onesvenus

Member

"When people talk about 'hardware VRS' they are mainly talking about API/drivers."

Really? So, when Microsoft says this (https://news.xbox.com/en-us/2020/06/10/everything-you-need-to-know-about-the-future-of-xbox/):

"...the custom designed processor at the heart of Xbox Series X includes brand new innovative capabilities such as Variable Rate Shading (VRS)"

your take is that they are talking about API capabilities?

Andodalf

Banned

Now turn for Variable Rate Compute Shaders (VRCS) on XSeries. New Talk

Variable Rate Compute Shaders on Xbox Series X|S

Microsoft Game Dev can help you find the right mix of tools and services to fit your game development needs.

developer.microsoft.com

"A basic understanding of Variable Rate Shading is assumed."

Well a lot of people in this thread have shown not to have that!

"Really? So, when Microsoft says this (https://news.xbox.com/en-us/2020/06/10/everything-you-need-to-know-about-the-future-of-xbox/): your take is that they are talking about API capabilities?"

Yes, you technically could do VRS on an Xbox One too, but you would get very little out of it at such a low res and probably more overhead. Compared to Xbox One there are improved capabilities on the Xbox Series consoles, and aiming for higher res makes support for it more viable. There are even some better capabilities compared to PS5, but I was referring more to the "Tier 2 VRS" that people use interchangeably for "hardware VRS". "Hardware VRS" there is just the API compatibility. PS5 can do the screen space VRS people are referring to as Tier 2 described in the link and OP video.

onesvenus

Member

"Yes, you technically could do VRS on an xbox one too but you would get very little out of it at such a low res and probably more overhead. Compared to xbox one there are improved capabilities on the Xbox series console and aiming for higher res makes support for it more viable. There are even some better capabilities compared to PS5 but I was referring more to the 'Tier 2 VRS' that people use interchangeably for 'hardware VRS'. hardware VRS there is just the API compatibility. PS5 can do the screen space VRS people are referring to as Tier 2 described in the link and OP video."

But then those wouldn't be "new innovative capabilities", would they?

I'm sorry, but do you have any source for what you are saying? It seems like a whole lot of nothing if what you say is true.

"But then that wouldn't be 'new innovative capabilities', would they?"

Why wouldn't they be new innovative capabilities?

"I'm sorry but do you have any source about what you are saying? It seems like it's a big amount of nothing if what you say is true"

I've sent you a link already; read part 3 of it. You can even compare it to the VRCS talk and see how it basically describes the exact same thing.

VFXVeteran

Banned

OK. You are sounding more and more like a fanboy. I'm sorry. This will be the last time I reply.

"I don't understand what you are referring about with pipelines. But in this discussion, I'm talking about the rendering pipeline."

"And in this generation we have some of the biggest changes ever. For once, the whole geometry engine is revamped. Especially with Mesh Shaders and Amplification Shaders, replacing the whole geometry part of the rendering pipeline."

Where is the game that will have this? Point to it.

"Then we have ray-tracing, that can replace or enhance several parts of the rendering pipeline. Be it shadows, reflections or global Illumination."

I already told you that these consoles don't have the bandwidth to implement the entire RT lighting at any reasonably clean resolution. Piecemeal RT isn't going to push visuals either (ala Spiderman). You need the entire lighting pipeline being RT.

"One of the advantages of Mesh Shaders and even primitive Shaders is the ability to better cull unseen geometry, at an early stage. This means less resources spent on rendering invisible polygons, but also less overdraw for pixel shading. So it's a net win all around."

This has nothing to do with shading triangles that ARE seen. Got Bandwidth?

"Also consider that the thing that spends most of shader work, is fragment shading and this depends mostly on render resolution. Not geometry."

BANDWIDTH!!

"DLSS 1.9 was running on shaders, using DP4A. So will XESS."

"Regardless, have you seen what TAAU can do? I have and although it's not as good as DLSS, it is still something with great results."

What game are we talking about again? Or is this the imaginary game you see 5yrs from now?

"The PS5 versions of Spider-man with lots of ray-tracing effects."

RT reflections is hardly "lots". LOL!

"Ratchet and Clank with great effects of ray-tracing, fur rendering, SSD for level loading."

So is R&C now a giant leap up from last gen? I'll answer that - no. It also only has RT reflections. The fur rendering is typical of any game that has fur. SSD loading doesn't solve the rendering equation.

"Metro Exodus Enhanced with real time Global Illumination."

And at 1080p for consoles. And NO ONE praises that game's visuals because it looks so ugly artistically.

"But do you know what is a compiler? And why ML is important to improve performance and efficiency of compiled code?"

I'll do one better. Do you know how to write a shader in a shader language? How about how the math looks for a GGX PBR model that 90% of games today use to shade their materials? You should stop being a fanboy and actually implement the things you say you know. Perhaps then you'll see how difficult it is to adhere to you and many others on this board's expectations. You guys are in for a rude awakening.
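For anyone wondering what the GGX math mentioned here looks like, this is a minimal sketch of just the normal distribution term, D(h) = a^2 / (pi * ((n.h)^2 * (a^2 - 1) + 1)^2). The function name `ggx_ndf` and the roughness-squared remap are assumptions chosen for illustration; a full PBR shader also needs the geometry and Fresnel terms, and nothing here comes from any engine discussed in the thread.

```python
import math


def ggx_ndf(n_dot_h: float, roughness: float) -> float:
    """GGX (Trowbridge-Reitz) normal distribution term D(h).

    n_dot_h   : cosine of the angle between the surface normal and half vector
    roughness : perceptual roughness in (0, 1]; squared to get alpha, which is
                a common convention but an assumption in this sketch
    """
    alpha = roughness * roughness
    a2 = alpha * alpha
    denom = n_dot_h * n_dot_h * (a2 - 1.0) + 1.0
    return a2 / (math.pi * denom * denom)


# A smoother surface concentrates the highlight around n_dot_h == 1.
peak_smooth = ggx_ndf(1.0, 0.5)
peak_rough = ggx_ndf(1.0, 1.0)
print(peak_smooth, peak_rough)
```

The point of the sketch is only that the per-pixel cost is a handful of multiplies and one divide per light, which is why shading cost scales with the number of pixels shaded.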


winjer

Gold Member

"OK. You are sounding more and more like a fanboy. I'm sorry. This will be the last time I reply."

Someone disagrees with you and that makes them a fanboy. Grow up.

Besides, I'm not the one that has the tag "Playstation fanclub"

Where is the game that will have this? Point to it.

Every game that implements it.

You asked me what new features and techniques this generation has that will make a difference. Mesh and primitive shaders is one of them.

Considering that Mesh shaders are the standard in DX12U, both on PC and Xbox, and that primitive shaders are on PS5, that means most games will probably use it in the future.

I already told you that these consoles don't have the bandwidth to implement the entire RT lighting at any reasonably clean resolution. Piecemeal RT isn't going to push visuals either (ala Spiderman). You need the entire lighting pipeline being RT.

What bandwidth? It's like everything to you is bandwidth. Sorry to disappoint you, but it's not.

RT in consoles is limited primarily by shader throughput.

They don't have dedicated hardware for ray traversal. Only the ray-box and ray-triangle intersection tests are hardware accelerated.

This has nothing to do with shading triangles that ARE seen. Got Bandwidth?

BANDWIDTH!!

Once again, it seems to me you have no idea of what you are talking about. So when you have to try to explain any technical stuff, the only word you know is bandwidth.

And the funny thing is that RDNA2 has a tile based rendering arch, meaning it's less dependent on memory bandwidth than previous generations.

"What game are we talking about again? Or is this the imaginary game you see 5yrs from now?"

DLSS 1.9 was in Control, before the patch for DLSS 2.0.

XESS is in development along with Intel's Alchemist. But it is already implemented and shown in Hitman 3 and The Riftbreaker. It has also been shown in UE5, and an SDK will be released soon after their cards.

TAAU can be used in every game that uses UE4.19 or later. This version of UE was released in 2018. I've used it in several games already.

If you watch DF videos regularly, you will find that several games on consoles are already using TAAU.

RT reflections is hardly "lots". LOL!

Plenty of RT reflections though. Impressive upgrades, for what are essentially PS4 games.

And don't forget all the other games I pointed out.

So is R&C now a giant leap up from last gen? I'll answer that - no. It also only has RT reflections. The fur rendering is typical of any game that has fur. SSD loading doesn't solve the rendering equation.

No. Not all games that render fur have that level of detail. But please, prove me wrong and show me a game, on the PS4 that has fur rendered as well as R&C on the PS5.

And at 1080p for consoles. And NO ONE praises that game's visuals because it looks so ugly artistically.

Strange that when a game doesn't fit your narrative, it instantly becomes a bad game.

Metro Exodus Enhanced received lots of praise for its RTGI implementation. I played it and was very impressed with the result.

So were a lot of gamers, and Digital Foundry made a great video showcasing this tech.

I'll do one better. Do you know how to write a shader in a shader language? How about how the math looks for a GGX PBR model that 90% of games today use to shade their materials? You should stop being a fanboy and actually implement the things you say you know. Perhaps then you'll see how difficult it is to adhere to you and many others on this board's expectations. You guys are in for a rude awakening.

Once again with the fanboy insults, just because someone disagrees with you.

Fortunately you said you would not answer any more in this thread.

onesvenus

Member

"Why wouldn't they be new innovative capabilities?"

If they could be done in the PS4/One generation via sw it's obvious there's nothing new about them, thus, according to you they are lying, aren't they?

"I've sent you a link already read part 3 of it. You can even compare it to the VRCS talk and see how it basically describes the exact same thing."

A source about the VRS capabilities of Xbox being only software-based as you claim

"If they could be done in the PS4/One generation via sw it's obvious there's nothing new about them, thus, according to you they are lying, aren't they?"

It's not them lying to you because it is a new capability. I mean when RTX cards released they talked about raytracing as a new capability on RTX cards but they could still go back and add that capability to their old GTX cards via driver update. The same with RTX Voice cancelling or SAM on AMD cards. RTX on GTX wasn't good but it was there as "hardware supported". Would adding VRS be any good for engines to use on an xbox one? Probably not, because at the end you still have your decade old bandwidth, memory and compute limits but it's possible.

A source about the VRS capabilities of Xbox being only software-based as you claim

Driver/API support is not considered software-based in technical terms.

I'm not saying it's only software support. I'm saying when people refer to screen space 'Tier 2 VRS' they are talking about API support or when they say software they have written their own. So when people talk about "tier 2 hardware VRS" here they are referring to something other consoles can do via compute shaders and something the coalition actually seem to be doing via compute shaders too because they have a deferred rendering pipeline.
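To make the "screen space VRS via compute" idea concrete, here is a rough CPU-side sketch in Python. It is not The Coalition's implementation and not any console API: the function `shade_with_rate_image`, the per-tile rate callback, and the stand-in `shade` function are all hypothetical names invented for this illustration. Each tile picks a rate, the expensive shading runs once per coarse block, and the result is broadcast to the pixels that block covers.

```python
def shade_with_rate_image(width, height, tile, rate_of_tile, shade):
    """Shade an image where every tile chooses a 1x1 or 2x2 shading rate.

    Returns the shaded image and the number of shade() invocations.
    """
    image = [[None] * width for _ in range(height)]
    invocations = 0
    for ty in range(0, height, tile):
        for tx in range(0, width, tile):
            # 1 = shade every pixel, 2 = shade once per 2x2 block.
            rate = rate_of_tile(tx // tile, ty // tile)
            for y in range(ty, min(ty + tile, height), rate):
                for x in range(tx, min(tx + tile, width), rate):
                    value = shade(x, y)
                    invocations += 1
                    # Broadcast the coarse sample to every pixel it covers.
                    for py in range(y, min(y + rate, ty + tile, height)):
                        for px in range(x, min(x + rate, tx + tile, width)):
                            image[py][px] = value
    return image, invocations


def shade(x, y):
    # Stand-in for an expensive lighting calculation.
    return (x * 31 + y * 17) % 256


# 16x16 image, 8x8 tiles; the right-hand tiles are shaded at the coarse rate.
image, invocations = shade_with_rate_image(
    16, 16, 8, lambda tile_x, tile_y: 2 if tile_x == 1 else 1, shade
)
print(invocations, "shader invocations instead of", 16 * 16)
```

A real implementation would derive the rate per tile from image content (contrast, motion) and run on the GPU; the sketch only shows where the savings come from.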


Sega Orphan

Banned

"But do you know what is a compiler? And why ML is important to improve performance and efficiency of compiled code?"

Yes, the XSX has lower precision abilities than the PS5 does, but the issue is if it will be exploited or not. Sure, MS internal studios may do it, but I am 99.9% sure no third parties are going to use it on multiplat games. It's not just flick a switch like VRS is (yes, not quite flick a switch but not far off it), but you need supercomputers that do the training etc, and they aren't going to go to all that trouble for the XSX at the expense of PS5. It's extra work, for no gain and possible lack of parity, which only causes them issues.

It's great that MS put it in, and I hope it gets used, but developers are really slow at adopting new tech.

01011001

Banned

Yes, the XSX has lower precision abilities than the PS5 does, but the issue is if it will be exploited or not. Sure, MS internal studios may do it, but I am 99.9% sure no third parties are going to use it on multiplat games.

Intel XeSS could mean that Multiplats will absolutely use it. it is a hardware agnostic upscaler, so why not use it when possible? especially since many devs will already implement it into their PC versions soon


ABrokenToy

Member

So why are we seeing the best looking games on PlayStation 5?

winjer

Gold Member

Yes, the XSX has lower precision abilities than the PS5 does, but the issue is if it will be exploited or not. Sure, MS internal studios may do it, but I am 99.9% sure no third parties are going to use it on multiplat games. It's not just flick a switch like VRS is (yes, not quite flick a switch but not far off it), but you need supercomputers that do the training etc, and they aren't going to go to all that trouble for the XSX at the expense of PS5. It's extra work, for no gain and possible lack of parity, which only causes them issues.

It's great that MS put it in, and I hope it gets used, but developers are really slow at adopting new tech.

When I was talking about ML to improve compilers, I'm not talking about a console executing ML at runtime.

I'm talking about ML improving compiled code. For example, in a CPU compiler it can reduce branch mispredictions. This would mean fewer stalls in the CPU execution pipeline, thus increasing CPU performance.


Sega Orphan

Banned

"Intel XeSS could mean that Multiplats will absolutely use it. it is a hardware agnostic upscaler, so why not use it when possible? especially since many devs will already implement it into their PC versions soon"

Because I would assume you will then be introducing another separate deep learning system? From how I understand it, and I may well be wrong, Nvidia provide the supercomputers to do the DLSS training, and Intel would have to do the same for their new cards, which would then require MS to supply the supercomputers to do the training for the Series? Three different requirements and possibly giving three different results as far as quality goes. Not sure a dev goes to that degree to give the Xbox an advantage over PS5 for no pay off.

Again, I could well be misunderstanding how this ML will work in practice.

onesvenus

Member

"I'm saying when people refer to screen space 'Tier 2 VRS' they are talking about API support or when they say software they have written their own."

It's great that you know what other people say. Since we are talking about personal feelings, I've never seen someone talk about "hardware support" without implying some kind of hardware. Does that sound like a valid counterpoint to you?

Panajev2001a

GAF's Pleasant Genius

"Yes, the XSX has lower precision abilities than the PS5 does"

Do we know this for sure or is it a case of “if Sony does not shout about it they must not support it” as the latter does not really pair well with how they deal with HW specs in their last few console designs (e.g.: look at the latency improvements in their controllers they said nothing about in any big presentation or PR and yet… they are there).

01011001

Banned

Do we know this for sure or is it a case of “if Sony does not shout about it they must not support it” as the latter does not really pair well with how they deal with HW specs in their last few console designs (e.g.: look at the latency improvements in their controllers they said nothing about in any big presentation or PR and yet… they are there).

there was like a compatibility sheet for XeSS where the PS5 was absent. but no idea where that sheet was coming from tbh


winjer

Gold Member

Because I would assume you will then be introducing another separate deep learning system? From how I understand it, and I may well be wrong, is that Nvidia provide the super computers to do the DLSS training, and intel would have to do the same for their new cards, which would then require MS to supply the super computers to do the training for the Series? Three different requirements and possibly giving three different results as far as quality goes. Not sure a dev goes to that degree to give the Xbox an advantage over PS5 for no pay off.

Again, I could well be misunderstanding how this ML will work in practice.

Not really. Intel would have to train its implementation.

But the compiled library could be used in consoles and PC alike. No need to train the AI for each platform.

"It's great that you know what other people say. Since we are talking about personal feelings, I've never seen someone talk about 'hardware support' without implying some kind of hardware. Does that sound as a valid counterpoint to you?"

Counterpoint to what? Where do personal feelings come from?

I've seen plenty of people talk about Tier 2 VRS capability being some kind of hardware instead of the term for API compatibility. That's how my conversation with you started.

I said:

"'Xbox exclusive circuitry' is a tongue in cheek reference to (Riky's) idea that 'Tier 2 VRS', screen space VRS in this case, can't be done on other hardware. In reality even a PS4 using compute shaders can do it believe it or not."

Then in reply to that you asked:

"Isn't it true that Xbox has hardware VRS that's not in PCs or the PS5? I thought that was confirmed by Microsoft."

Then I said when people talk about "hardware VRS" here they are mainly referring to using the driver/API but you can write your own compute shader screen space VRS, often even more effectively. Then you concentrated on an MS marketing article's use of the words:

"brand new innovative capabilities such as Variable Rate Shading (VRS)"

Which I assumed was to suggest PS4/Xbox One can't do VRS, otherwise that blurb would be a lie. I said it's not necessarily a lie.

Where are the feelings?

If you look at The Coalition's latest video you would notice that they too use compute shader VRS because "hardware VRS" got no gains on their deferred rendering engine.

Loxus

Member

"You quoted me and I quoted you so I'm not talking about you but to you here. You didnt use that exact circuitry phrase, others did, which you liked and you continued on with the exact same stuff:"

"And that's where you're trying to make incorrect claims and steer things to console wars. This thread isn't about xbox series x games it's about game optimisation results and it's about an optimisation that can be done on all hardware and multiplatform games."

"'Xbox exclusive circuitry' is a tongue in cheek reference to your idea that 'Tier 2 VRS', screen space VRS in this case, can't be done on other hardware. In reality even a PS4 using compute shaders can do it believe it or not."

"This boost from nanite and VRS isn't a feature that PCs and PS5 are missing and the percentage gain would not be different. I even gave you an example with a GDC talk already out using a similar pipeline. You think this is about xbox series secret sauce though and you try your hardest to make tech like VRS and SFS about wars all the time. I remember you arguing about PRT+ vs SFS x2 gains back in the day but can't be bothered dig up that exact post. I just remember you went around making claims like this all the time"

"Things don't turn out how you expected when the actual results of games came in with VRS though and you still argue that a gap will materialise. One thing you're right about is that the conversation has run its course."

Forget trying to prove a point to Riky.

He completely ignores everything about the PS5's hardware.

He thinks you can only achieve hardware VRS with RB+ ROPs that Nvidia doesn't have. So I guess Nvidia has been using software VRS all this time?

He also ignores that the PS5 Geometry Engine is customized to do Foveated Rendering.

He also ignores that the gains between software VRS and hardware VRS on a 2D screen (not VR) are small.

Riky

$MSFT

Forget trying to prove a point to Riky.

He completely ignores everything about the PS5's hardware.

He thinks you can only achieve hardware VRS with RB+ ROPs that Nvidia doesn't have. So I guess Nvidia has been using software VRS all this time?

He also ignores that the PS5 Geometry Engine is customized to do Foveated Rendering.

He also ignores that the gains between software VRS and hardware VRS on a 2D screen (not VR) are small.

Talking about me again, my opinion obviously matters.

Like I said take it up with AMD, id and Digital Foundry. Their statements say it all. This thread isn't about PS5 hardware, I suggest you make one if you care so much.

"there was like a compatibility sheet for XeSS where the PS5 was absent. but no idea where that sheet was coming from tbh"

I remember seeing that sheet too and from what I recall, it seemed fairly conclusive. Assuming it was accurate, out of all the features I've heard people crowing about I think ML is probably the one that could make the biggest visual difference in the future.

DaGwaphics

Member

Talking about me again, my opinion obviously matters.

Like I said take it up with AMD, id and Digital Foundry. Their statements say it all. This thread isn't about PS5 hardware, I suggest you make one if you care so much.

It's beyond the realm of comprehension for some folks that threads exist that have nothing to do with PS5, nor is the PS5 relevant to the discussion in that thread.

Is there a rundown of the second video (VRCS one) in english for the plebs? They are such long and technical videos on their own, LOL.

Loxus

Member

"Yes, the XSX has lower precision abilities than the PS5 does, but the issue is if it will be exploited or not. Sure, MS internal studios may do it, but I am 99.9% sure no third parties are going to use it on multiplat games. It's not just flick a switch like VRS is (yes, not quite flick a switch but not far off it), but you need supercomputers that do the training etc, and they aren't going to go to all that trouble for the XSX at the expense of PS5. It's extra work, for no gain and possible lack of parity, which only causes them issues."

"It's great that MS put it in, and I hope it gets used, but developers are really slow at adopting new tech."

You do know the PS5 has been confirmed to be RDNA 2, with RDNA 2 Compute Units, since Road to PS5, right?

In case you didn't know, INT4 and INT8 are done via the ALUs (Stream Processors) within a CU.

There is no extra hardware on the XBSX for that; INT4/8 are done by the CUs there too.

Read the RDNA whitepaper for a better understanding.

Loxus

Member

"Talking about me again, my opinion obviously matters."

"Like I said take it up with AMD, id and Digital Foundry. Their statements say it all. This thread isn't about PS5 hardware, I suggest you make one if you care so much."

It isn't about PS5, yet you're talking about it on the previous page?

When are you going to stop being a hypocrite and liar?

Riky

$MSFT

It isn't about PS5, yet you're talking about it on the previous page?

When are you going to stop being a hypocrite and liar?

I'm not the one who brought it up, try reading the thread to enlighten yourself, you're just trolling an Xbox specific thread now.

Riky

$MSFT

"When we recently interviewed David Cage, CEO and founder of Quantic Dream, he highlighted the Xbox Series X's shader cores as more suitable for machine learning tasks, which could allow the console to perform a DLSS-like performance-enhancing image reconstruction technique."

Xbox Series X's Advantage Could Lie in Its Machine Learning-Powered Shader Cores, Says Quantic Dream

Looking at the hardware of Xbox Series X and PS5, Quantic Dream believes Microsoft's advantage could lie in the console's ML-powered shaders.

wccftech.com

Jason Ronald has talked extensively about doing far more with this tech.

Loxus

Member

"I'm not the one who brought it up, try reading the thread to enlighten yourself, your just trolling an Xbox specific thread now."

I did, thinking something new about VRS was brought into the thread after seeing it had 7 pages.

But it just turned out to be console warring and fanboy wet dreams (mainly by you) about nothing new.

Riky

$MSFT

I did, thinking something new about VRS was brought into the threads after seeing it had 7 pages.

But it just turned out to be console warring and fanboy wet dreams (mainly by you) about nothing new.

You've only appeared to attack people and troll, pretty sad. Added nothing to the discussion as usual.

DaGwaphics

Member

I did, thinking something new about VRS was brought into the threads after seeing it had 7 pages.

But it just turned out to be console warring and fanboy wet dreams (mainly by you) about nothing new.

The video in the OP is new and is discussing a new implementation from what they had been using? It is what it is, nothing more, nothing less.

Loxus

Member

"'When we recently interviewed David Cage, CEO and founder of Quantic Dream, he highlighted the Xbox Series X's shader cores as more suitable for machine learning tasks, which could allow the console to perform a DLSS-like performance-enhancing image reconstruction technique.'"

"Xbox Series X's Advantage Could Lie in Its Machine Learning-Powered Shader Cores, Says Quantic Dream"

"Looking at the hardware of Xbox Series X and PS5, Quantic Dream believes Microsoft's advantage could lie in the console's ML-powered shaders."

"wccftech.com"

"Jason Ronald has talked extensively about doing far more with this tech."

It's quite obvious why he made that statement.

Let's enter math class.

The formulas for calculating INT8 and INT4 throughput are as follows:

INT8: No. of CUs * 512 INT8 ops per CU per clock * clock speed (MHz) / 1,000,000 = TOPS

INT4: No. of CUs * 1024 INT4 ops per CU per clock * clock speed (MHz) / 1,000,000 = TOPS

XBSX

INT8: 52 * 512 * 1825 / 1,000,000 = 48.58 TOPS

INT4: 52 * 1024 * 1825 / 1,000,000 = 97.17 TOPS

PS5

INT8: 36 * 512 * 2233 / 1,000,000 = 41.15 TOPS

INT4: 36 * 1024 * 2233 / 1,000,000 = 82.31 TOPS

Based on that, XBSX is more suitable for ML.
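The arithmetic above is easy to check with a few lines of Python. This is only a sketch of the peak-rate formula as posted: the helper name `int_tops` is made up for the example, and the PS5 rows assume it executes packed INT8/INT4 at the same per-CU rate, which is exactly the point under dispute in this thread. The figures in the post are truncated to two decimals, so a rounded printout can differ in the last digit.

```python
def int_tops(compute_units: int, ops_per_cu_per_clock: int, clock_mhz: float) -> float:
    """Peak integer throughput in TOPS (tera-operations per second)."""
    ops_per_second = compute_units * ops_per_cu_per_clock * clock_mhz * 1e6
    return ops_per_second / 1e12


# (number of CUs, clock in MHz) as quoted in the post.
CONSOLES = {"XBSX": (52, 1825), "PS5": (36, 2233)}

results = {}
for name, (cus, mhz) in CONSOLES.items():
    results[name] = {
        "INT8": int_tops(cus, 512, mhz),
        "INT4": int_tops(cus, 1024, mhz),
    }
    print(name, results[name])
```

These are theoretical peaks only; they say nothing about how much of that rate a real upscaling network would sustain.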

But I like how you ignore confirmed information from official places like Sony.

And let's not forget this patent.

One of the inventors associated with the patent has this on their LinkedIn:

Most recently I've spearheaded work in using Neural Rendering to enhance traditional rendering methods, focusing on using implicit neural representations and how to make them run efficiently. This includes creating custom high performance inference using compute shaders. The target for this work is PlayStation 5. Development done in Python, Unity, C#, compute shaders with offline training using Pytorch.

Loxus

Member

"You've only appeared to attack people and troll, pretty sad. Added nothing to the discussion as usual."

I like how you label me as attacking and trolling when you always ban bait.

There is nothing more to add to this thread. How much more can you really add to this discussion?

All I see is back and forth arguments about nothing. I'm surprised this thread is still alive.

There isn't anything fascinating enough about VRS, compared to RT, ML and Nanite, to warrant 8 pages.

Riky

$MSFT

It quite obvious why he made that statement.

Let's enter math class.

The formula for calculating INT8 and INT4 are as follows:

INT8: No. of CUs * 512 INT8 bits per CU * clock speed MHz = TOPS

INT4: No. of CUs * 1024 INT4 bits per CU * clock speed MHz = TOPS

XBSX

INT8: 52 * 512 * 1825 = 48.58 TOPs

INT4: 52 * 1024 * 1825 = 97.17 TOPs

PS5

INT8: 36 * 512 * 2233 = 41.15 TOPs

INT4: 36 * 1024 * 2233 = 82.31 TOPs

Base on that, XBSX is more suitable for ML.

But I like how you ignore confirmed information from official places like Sony.

And let's not forget this patent.

Where one of the inventors associated with the patent have this on their LinkedIn.

Nah your original post is just an attack on me, sad. You're still posting irrelevant theories about PS5 now and the thread is from MS first party developers about Series consoles. It's a common tactic dribbling fanboys use, don't like anything positive about Xbox so they attack people who are happy about the news in the thread and spam irrelevant charts to derail it.

There is a stark contrast between this thread and the Cerny RT patent thread, everyone can see that.

Loxus

Member

"Nah your original post is just an attack on me, sad. You're still posting irrelevant theories about PS5 now and the thread is from MS first party developers about Series consoles. It's a common tactic dribbling fanboys use, don't like anything positive about Xbox so they attack people who are happy about the news in the thread and spam irrelevant charts to derail it."

"There is a stark contrast between this thread and the Cerny RT patent thread, everyone can see that."

And how about you?

I'm still waiting to see these performance advantages.

onesvenus

Member

"Counterpoint to what, where do personal feelings come from?"

"I've seen plenty of people talk about Tier 2 VRS capability being some kind of hardware instead of the term for API compatibility. That's how my conversation with you started"

"I said:"

"Then in reply to that you asked:"

"Then I said when people talk about 'hardware VRS' here they are mainly referring to using the driver/API but you can write your own compute shader screen space VRS, often even more effectively. Then you concentrated on a MS marketing articles use of the words:"

"'brand new innovative capabilities such as Variable Rate Shading (VRS)'"

"Which I assumed was to suggest PS4/xbox one can't do VRS otherwise that blurb would be a lie. I said it's not necessarily a lie."

"Where are the feelings?"

"If you look at The Coalitions latest video you would notice that they too even use compute shader VRS because 'hardware VRS' got no gains on their deferred rendering engine."

You never proved there's no hardware in Xbox to do VRS. It's your feeling that there's none

Riky

$MSFT

"Through close collaboration and partnership between Xbox and AMD, not only have we delivered on this promise, we have gone even further introducing additional next-generation innovation such as hardware accelerated Machine Learning capabilities for better NPC intelligence, more lifelike animation, and improved visual quality via techniques such as ML powered super resolution."

A Closer Look at How Xbox Series X|S Integrates Full AMD RDNA 2 Architecture - Xbox Wire

We here at Team Xbox would like to congratulate and celebrate our amazing partners at AMD on today’s announcement of the Radeon RX 6000 Series of RDNA 2 GPUs. It was incredible to see AMD demonstrate the power and potential that the new AMD RDNA 2 architecture can deliver to gamers around the...

news.xbox.com

dcmk7

Banned

"And how about you?"

"I'm still waiting to see these performance advantages."

Wouldn't waste your time with someone who isn't interested in any sort of discussion.

The guy has been banned countless times for trolling, looks like he hasn't stopped. Sad.

Neo_game

Member

Does Doom Eternal even require any VRS? That game is as smooth as butter on any hardware. I am very pessimistic about VRS but I think they will use it or something like it in racing games and fast paced games to achieve 120fps, and in VR games. Probably Forza 8 will use it. For 60 or 30fps modes I would like to see more gfx effects personally. We have games like DL2 which run better on DX11, and Elden Ring probably would also run better on DX11. There are many games that perform better on DX11. IMO devs first need to get this right, then they can think about VRS.

Riky

$MSFT

Does Doom Eternal even require any VRS? That game is as smooth as butter on any hardware. I am very pessimistic about VRS, but I think they will use it or something like it in racing games, fast-paced games targeting 120fps, and VR games. Forza 8 will probably use it. For 60 or 30fps modes I would personally like to see more gfx effects. We have games like DL2 which run better on DX11, and Elden Ring would probably also run better on DX11. There are many games that perform better on DX11. IMO devs first need to get this right, then they can think about VRS.

Yes, Doom Eternal runs at a higher resolution in the 120fps mode due to Tier 2 VRS; id were interviewed about it.

winjer

Gold Member

It's quite obvious why he made that statement.

Let's enter math class.

The formulas for calculating INT8 and INT4 throughput are as follows:

INT8: No. of CUs * 512 INT8 ops per CU per clock * clock speed (MHz) / 1,000,000 = TOPS

INT4: No. of CUs * 1024 INT4 ops per CU per clock * clock speed (MHz) / 1,000,000 = TOPS

XBSX

INT8: 52 * 512 * 1825 / 1,000,000 = 48.59 TOPS

INT4: 52 * 1024 * 1825 / 1,000,000 = 97.18 TOPS

PS5

INT8: 36 * 512 * 2233 / 1,000,000 = 41.16 TOPS

INT4: 36 * 1024 * 2233 / 1,000,000 = 82.32 TOPS

Based on that, XBSX is more suitable for ML.
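For anyone who wants to check the arithmetic, here is a minimal Python sketch of the formula above. The function name `peak_tops` and the dictionary layout are made up for illustration. The per-CU rates (512 and 1024 per clock) and the clock speeds are taken straight from the post, and applying the same per-CU rate to the PS5 is the post's assumption, not a confirmed spec. The results agree with the figures above to within rounding in the second decimal place.

```python
def peak_tops(compute_units: int, ops_per_cu_per_clock: int, clock_mhz: float) -> float:
    """Theoretical peak throughput in TOPS.

    CUs * ops per CU per clock * clock in Hz gives ops per second;
    dividing by 1e12 converts that to tera-ops per second.
    """
    return compute_units * ops_per_cu_per_clock * clock_mhz * 1e6 / 1e12


# (CU count, clock in MHz) as quoted in the post.
CONSOLES = {"XBSX": (52, 1825), "PS5": (36, 2233)}

# Per-CU, per-clock rates as quoted in the post (assumed identical on both consoles).
RATES = {"INT8": 512, "INT4": 1024}

if __name__ == "__main__":
    for name, (cus, mhz) in CONSOLES.items():
        for precision, rate in RATES.items():
            print(f"{name} {precision}: {peak_tops(cus, rate, mhz):.2f} TOPS")
```

These are theoretical peaks only; they say nothing about whether a given chip actually exposes the packed integer instructions needed to reach them.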

But I like how you ignore confirmed information from official places like Sony.

And let's not forget this patent.

Where one of the inventors associated with the patent has this on their LinkedIn.

ML is not just about granularity for FP and Int execution. It's about processing vector accumulations in a matrix.

For that, support for DP4A or Tensor units is necessary, and that is what the PS5 lacks.

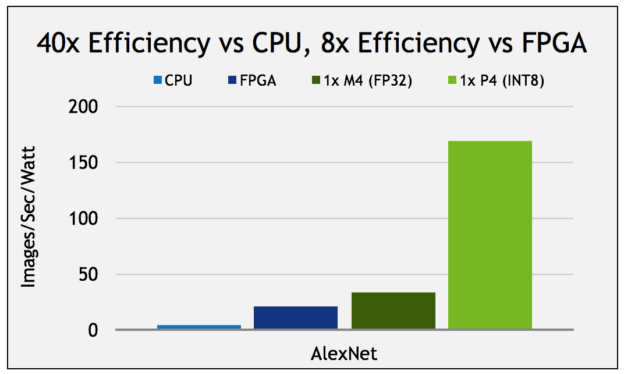

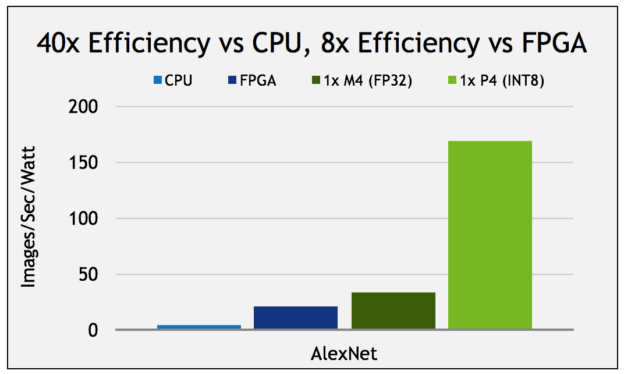

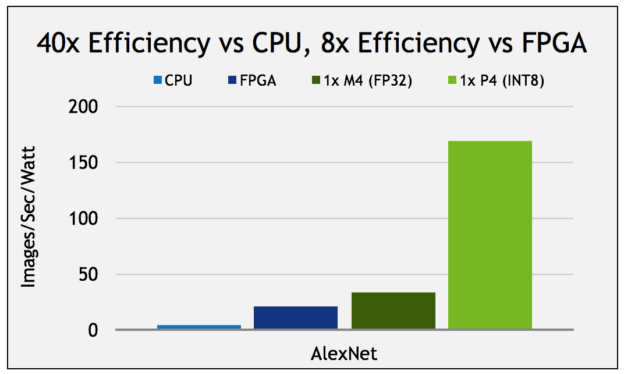

This is an example of the level of performance you get using DP4A and a regular GPU.

The M4 is a Maxwell-based GPU from the Tesla range of cards. The P4 is Pascal with DP4A.
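For readers unfamiliar with DP4A: it is a four-element dot product of packed 8-bit integers, accumulated into a 32-bit integer in a single instruction. The sketch below only emulates those semantics in plain Python; it is not any vendor's intrinsic, the helper names `pack_int8x4`, `unpack_int8x4` and `dp4a` are made up for illustration, and the wrap-around on overflow is an assumption (some hardware variants saturate instead).

```python
def pack_int8x4(values):
    """Pack four signed 8-bit integers into one 32-bit word, lane 0 in the low byte."""
    if len(values) != 4 or not all(-128 <= v <= 127 for v in values):
        raise ValueError("expected four values in the int8 range")
    word = 0
    for lane, v in enumerate(values):
        word |= (v & 0xFF) << (8 * lane)
    return word


def unpack_int8x4(word):
    """Split a 32-bit word back into four signed 8-bit lanes."""
    lanes = []
    for lane in range(4):
        byte = (word >> (8 * lane)) & 0xFF
        lanes.append(byte - 256 if byte >= 128 else byte)
    return lanes


def dp4a(a_word, b_word, acc):
    """Return acc + dot(a, b) over four int8 lanes, wrapped to signed 32-bit (assumed)."""
    a, b = unpack_int8x4(a_word), unpack_int8x4(b_word)
    total = (acc + sum(x * y for x, y in zip(a, b))) & 0xFFFFFFFF
    return total - (1 << 32) if total >= (1 << 31) else total


if __name__ == "__main__":
    a = pack_int8x4([1, -2, 3, 4])
    b = pack_int8x4([5, 6, -7, 8])
    # 1*5 + (-2)*6 + 3*(-7) + 4*8 = 4, plus the accumulator 10
    print(dp4a(a, b, 10))
```

The point of doing this in hardware is that four multiplies and the accumulate happen per lane per instruction, which is where the INT8 speed-up over plain FP32 in the charts above comes from.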

You never proved there's no hardware in Xbox to do VRS. It's your feeling that there's none

What does 'no hardware in xbox' even mean here? Where have I said 'there is no hardware in xbox', whatever that even means here.

I've only been talking about the capability of the machines to do VRS.

There is no hardware dedicated to VRS if that's what you're asking.

Loxus

Member

ML is not just about granularity for FP and Int execution. It's about processing vector accumulations in a matrix.

For that, support for DP4A or Tensor units is necessary, and that is what the PS5 lacks.

This is an example of the level of performance you get using DP4A and a regular GPU.

The M4 is a Maxwell based GPU, for the Tesla range of cards. P4 is Pascal with DP4A.

Why are you even talking about Nvidia?

And it would also apply to the Xbox Series Consoles and AMD RDNA GPUs, not just PS5.

You should take some time out of your day and read AMD RDNA Whitepaper.

winjer

Gold Member

Why are you even talking about Nvidia?

And it would also apply to the Xbox Series Consoles and AMD RDNA GPUs, not just PS5.

You should take some time out of your day and read AMD RDNA Whitepaper.

Because nVidia was the first one to implement DP4A in their GPUs. Back in 2016.

And they wrote papers about it, as well as benchmarks.

AMD is NOW doing the same with RDNA2. At least on PC and Series S/X

Loxus

Member

Because nVidia was the first one to implement DP4A in their GPUs. Back in 2016.

And they wrote papers about it, as well as benchmarks.

AMD is NOW doing the same with RDNA2. At least on PC and Series S/X

I like how you still refuse to believe the PS5 has that capability.

Even though it's confirmed to be RDNA 2.

Even RDNA 1 has this capability.

There is no official information that says the PS5 isn't RDNA 2, yet here we are, over a year after release, and people still think the PS5 is RDNA 1.

I won't be replying to you anymore on this ML topic, as I don't wish to derail this thread any further. We can continue this in an ML-specific thread if you wish.

ML is not just about granularity for FP and Int execution. It's about processing vector accumulations in a matrix.

For that, support for DP4A or Tensor units is necessary, and that is what the PS5 lacks.

This is an example of the level of performance you get using DP4A and a regular GPU.

The M4 is a Maxwell based GPU, for the Tesla range of cards. P4 is Pascal with DP4A.

It's worth pointing out that AMD has had 'rapid packed math' for a while (since PS4 Pro at least), so it wouldn't be the same as the M4 FP32 efficiency even on old AMD cards. Nvidia Pascal offers more, though.

winjer

Gold Member

I like how you still refuse to believe the PS5 has that capability.

Even though it's confirmed to be RDNA 2.

Even RDNA 1 has this capability.

There is no official information that says the PS5 isn't RDNA 2, yet here we are, over a year after release, and people still think the PS5 is RDNA 1.

I won't be replying to you anymore on this ML topic, as I don't wish to derail this thread any further. We can continue this in an ML-specific thread if you wish.

Because a few devs have already stated or implied that the PS5 is not as good as the Series S/X and PC at ML.

Also, reports on SKUs for Radeon chips seem to confirm that the PS5 does not support DP4A.

You keep insisting that the PS5 has the full RDNA2 feature set, despite having been proven wrong a few times.

BTW, weren't you banned from the beyond3d forum?

Loxus

Member

Because a few devs have already stated or implied that the PS5 is not as good as the Series S/X and PC at ML.

Also, reports on SKUs for Radeon chips seem to confirm that the PS5 does not support DP4A.

You keep insisting that the PS5 has the full RDNA2 feature set, despite having been proven wrong a few times.

BTW, weren't you banned from the beyond3d forum?

I was never on beyond3d and I was never banned here or warned for console warring / trolling.

Are these Devs AMD or Sony?

The Devs didn't even mention INT8/4 or say the PS5 doesn't have the capability.

And how was I proven wrong?

Are you saying Mark Cerny is a liar?

Check this out.

Even with RDNA Display Engine, Media Engine, Rasterizer and ROPs, Renoir is still considered to be Vega.

You know why?

Because it has Vega Compute Units.

PS5 is confirmed to have RDNA 2 Compute Units.

But keep believing what you want.
