rnlval

Member

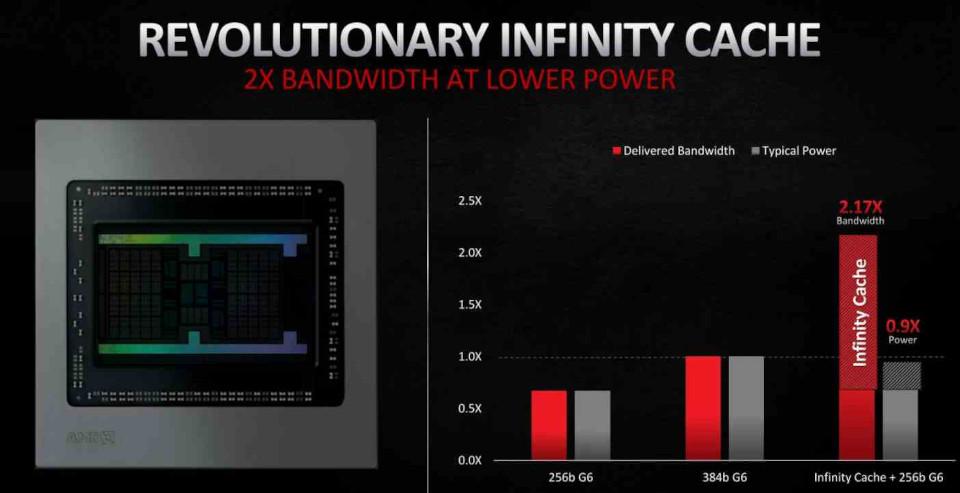

PC AIB GPU vendors are in the same position as Sony or MS when it comes to a GPU's out-of-the-box clock speed profile. AMD and NVIDIA can advise clock speed profiles, but the actual PC GPU clock speed profile setting comes from the PC AIB GPU vendors. EVGA is responsible when it applies FTW ("For-The-Win") super overclocks to its GPU cards and causes abnormal product failures.

YOU'RE. Not YOUR. Keep that in mind next time you call someone a dumb fuck.

The fact that you don't understand why I am bringing up overclocking just shows how utterly clueless you are about how TFLOPS and clocks correlate.

This right here is asinine. You are spitting in the face of over a decade of computer graphics to spout nonsense that has no basis in reality. Every PC GPU can already outperform its theoretical TFLOPS. It can go beyond its theoretical clock limits. We saw this in the video you continue to ignore, because it shows the 5700 XT hitting well above the 1.91 GHz clock speed AMD themselves used to calculate the card's theoretical TFLOPS number: 9.75.

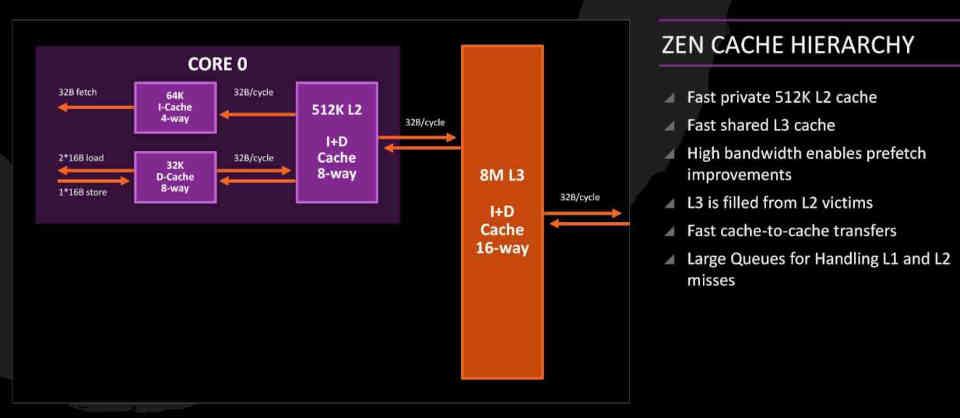

40 CUs * 64 shader processors * 2 * 1.905 GHz (the ~1.91 GHz boost clock) = 9.75 TFLOPS

For the 6600 XT they used the max clock of 2.589 GHz to calculate the card's theoretical TFLOPS.

32 CUs * 64 shader processors * 2 * 2.589 GHz = 10.6 TFLOPS
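To make the arithmetic easy to check, here is a minimal sketch of that formula in Python. The function name and defaults are mine, not AMD's, and it assumes the 5700 XT's advertised boost clock is 1.905 GHz (the figure rounded to 1.91 GHz in this post).

```python
def theoretical_tflops(compute_units, clock_ghz, shaders_per_cu=64, flops_per_clock=2):
    """Peak FP32 TFLOPS = CUs * shaders per CU * FLOPs per shader per clock * clock in GHz / 1000.

    flops_per_clock=2 reflects one fused multiply-add per shader per cycle.
    """
    return compute_units * shaders_per_cu * flops_per_clock * clock_ghz / 1000


# Assumed advertised boost clocks: 1.905 GHz (5700 XT), 2.589 GHz (6600 XT)
print(f"5700 XT: {theoretical_tflops(40, 1.905):.2f} TFLOPS")
print(f"6600 XT: {theoretical_tflops(32, 2.589):.2f} TFLOPS")
```

The only variable that changes from one moment to the next is the clock, which is why the "theoretical" number moves whenever the card boosts higher or lower than the advertised figure.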

Notice how both cards are able to hit higher clocks in most games, which means they are operating beyond their theoretical maximums. Something you said never happens, because supposedly cards never even hit their theoretical maximum.

Here is the 6600 XT running Horizon at 2.79 GHz, and the 5700 XT runs it at 2.153 GHz. Both are 200 MHz or more BEYOND the theoretical maximum AMD themselves advertised.
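A rough sketch of what those in-game clocks work out to, using the same CUs * 64 * 2 * clock formula. It assumes the 2.79 GHz reading belongs to the 6600 XT and that the advertised clocks are 1.905 GHz and 2.589 GHz. The helper names are just for illustration.

```python
def tflops(compute_units, clock_ghz):
    # 64 shaders per CU, 2 FLOPs per shader per clock
    return compute_units * 64 * 2 * clock_ghz / 1000


def clock_headroom_mhz(advertised_ghz, observed_ghz):
    """How far the observed clock sits above the advertised one, in MHz."""
    return (observed_ghz - advertised_ghz) * 1000


# name: (CUs, advertised GHz, observed GHz in Horizon) -- observed values are from the post
cards = {
    "5700 XT": (40, 1.905, 2.153),
    "6600 XT": (32, 2.589, 2.79),
}

for name, (cus, advertised, observed) in cards.items():
    print(
        f"{name}: +{clock_headroom_mhz(advertised, observed):.0f} MHz, "
        f"{tflops(cus, advertised):.2f} -> {tflops(cus, observed):.2f} TFLOPS"
    )
```

By this math both cards sit roughly 200 to 250 MHz above the advertised clock, which puts them at about 11.0 and 11.4 TFLOPS instead of 9.75 and 10.6.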

Your theory about cards not hitting max TFLOPS is WRONG on every level. The only way the card will not be fully utilized is if the game is capped at 30 fps or 60 fps and the dev is content with leaving a lot of performance on the table. But we have seen every game drop frames this gen, and nearly every game utilizes DRS (dynamic resolution scaling), which literally drops the resolution to allow the GPU to operate at full capacity so as to not leave performance on the table.

I have a UPS (Uninterruptible Power Supply) that lets me view the power consumption of my PS5, TV or PC at any given moment. I can easily see which games fully max out the APU and which ones don't. BC games without PS5 patches top out at 100 W. These are your Uncharted 4's running at PS4 Pro clocks. Then you have games like Horizon, which are patched to utilize higher PS5 clocks, and they consume a bit more. Then you have games like Doom Eternal running on the PS5 SDK, fully utilizing the console, and I can see the power consumption at 205-211 W consistently. Same thing DF reported when they ran Gears 5's XSX native port. It was up to 211-220 W at times. What's consuming all that power if not the goddamn GPU running at its max clocks?

This lines up with what happens on my PC. When I run Hades at native 4K capped at 120 fps, my GPU utilization sits at roughly 40%. If I leave the framerate uncapped, it goes up to 99% and runs the game at 350 fps. Games are designed to automatically scale up. It's been this way for well over a decade, since modern GPUs arrived in the mid 2000s. If they didn't scale, you would not see GoW and The Last Guardian automatically hit 60 fps on the PS5 without any patches. If they didn't scale with CUs, you would not see Far Cry 6 have a consistent resolution advantage on the XSX. Same goes for Doom Eternal. These games run well because modern GPUs are able to utilize not just clocks but all the shader cores.
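As a back-of-the-envelope check on the Hades numbers, here is a naive sketch that assumes GPU load scales linearly with frames rendered per second. That linear model is my simplification, not something a GPU driver reports.

```python
def expected_utilization(capped_fps, uncapped_fps, uncapped_utilization=0.99):
    """Naive estimate: utilization under a frame cap, assuming load scales linearly with fps."""
    return uncapped_utilization * capped_fps / uncapped_fps


# Hades figures from the post: 350 fps uncapped at 99%, capped at 120 fps
estimate = expected_utilization(capped_fps=120, uncapped_fps=350)
print(f"Estimated utilization at 120 fps: {estimate:.0%}")
```

The estimate comes out around a third, in the same ballpark as the roughly 40% I see. The gap is plausibly down to things a linear model ignores, like fixed per-frame overhead or the GPU dropping its clocks under light load.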

For example

MSI RTX 2080 Ti GX Trio is faster than RTX Titan XP reference.

MSI RTX 3080 Ti GX Trio is faster than RTX 3090 reference.