See this confuses me a lot. I was under the impression that AMD had stated the 7 series would be DX 12 ready. Now I don't know anymore.

It is....12.0 that is

DX releases are often a feature year for GPU manufacturers (i.e., expensive rebadges for little effort), so I'm not expecting any cards released in 2015 to be fully DX12 compatible.

So if I'm in the market for a GPU, how do I know which current cards will make use of Direct X 12.1?

I take it a 750ti does not?

So if Nvidia is confirming support for DX 12_1 on the 970 and 980, why did their site update the Titan X page with support for it when the 980 Ti was announced, but still hasn't done so for the 970 or 980? Something is wrong here.

http://www.geforce.co.uk/hardware/desktop-gpus/geforce-gtx-970/specifications

http://www.geforce.co.uk/hardware/desktop-gpus/geforce-gtx-titan-x/specifications

No, 750Ti does not. All other Maxwell cards, 960 and up, do, though.

It's probably not any major deal, though. I certainly wouldn't go judging which GPU I got over this, unless all else was equal.

I thought it was confirmed that R9 cards like the 280X would support DX12...I guess not, sigh.

What I don't get is what they mean by only *some* GCN cards supporting 12.0. Is that not base DX12 support? Or is there another, more basic level, with 12.0 being the first tiered 'feature level'?

If I'm doing a build though, I guess it's worth going for the GTX960 over the 750ti?

I don't know what GCN is. GCN is the acronym used to signify the GameCube. I find it hilarious that all these graphics cards are based on the GameCube.

If you're asking specifically, I wouldn't recommend the GTX960. If you're just asking generally, then it would depend on your budget and desires/expectations.

Maybe because the 12_1 only got fully finalised after the fact? Just a hunch.

All of the features specified in 12_1 are in the docs detailing the second-gen Maxwell architecture, so GM200 (Titan X, 980 Ti), GM204 (970, 980), and GM206 (960) are at the 12_1 feature level.

You should just assume they haven't updated their store page.

AMD's global head of technical marketing, Robert Hallock, confirmed to Computerbase that GCN-based graphics cards will support DX12 and that at least some will also support DX12 feature level 12_0. Computerbase mentions that Tonga, Hawaii and the Xbox One GPU will surely support 12_0; however, question marks remain for older chipsets like Bonaire, Tahiti and Pitcairn.

Meanwhile, Nvidia confirmed earlier their chipsets GM200, GM204 and GM206 will already support DX12 feature level 12_1. At GDC this year, MS gave an overview of feature level 12_0 and 12_1:

Hallock said it shouldn't be an issue that AMD cards only support feature level 12_0 since all the features relevant for games are included in 11_1 and 12_0. Also, most games will stick closely to what the consoles use and will therefore mostly use 12_0. Keep in mind, though, he's the global head of technical marketing, so his words shouldn't just be taken as gospel. Computerbase also pointed out Nvidia said the same thing when their Kepler cards didn't support feature level 11_1.

tl;dr: If you want a fully compatible DX12 card you should look into getting an Nvidia GTX 960, 970, 980, 980 Ti or Titan X. Or wait until more information regarding AMD's upcoming Fiji card(s) is released.
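For anyone who wants the summary above as a quick lookup, here is a rough Python sketch. It only encodes what this thread reports (the Hallock/Computerbase statements and Nvidia's confirmation), so treat the table as second-hand information, not an official compatibility list. The names `REPORTED_LEVEL` and `reported_level` are made up for illustration, and `None` marks the chips where question marks remain.

```python
from typing import Optional

# Highest DX12 feature level per chip, as reported in this thread only.
# None means the thread says support at 12_0 is still unconfirmed.
REPORTED_LEVEL = {
    "GM200": "12_1",         # Titan X, 980 Ti
    "GM204": "12_1",         # 970, 980
    "GM206": "12_1",         # 960
    "Tonga": "12_0",
    "Hawaii": "12_0",
    "Xbox One GPU": "12_0",
    "Bonaire": None,
    "Tahiti": None,
    "Pitcairn": None,
}


def reported_level(chip: str) -> Optional[str]:
    """Return the level reported for a chip, or None if unconfirmed or not listed."""
    return REPORTED_LEVEL.get(chip)


if __name__ == "__main__":
    for chip in ("GM204", "Hawaii", "Tahiti"):
        print(chip, reported_level(chip))
```

A chip that is missing from the table also returns `None`, so "unconfirmed" and "not mentioned in the thread" are deliberately treated the same way here.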

PS4? It probably does not have full feature level 12_1, but it would be interesting how they would place it.

PS4 doesn't use DirectX.

It won't have the hardware changes to theoretically support 12_1.

My question is, what's the difference between 12_0 and 11_1?

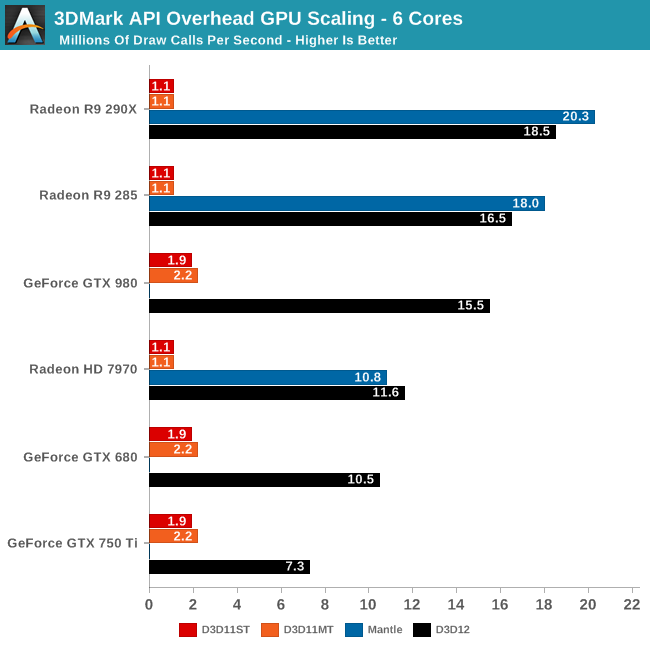

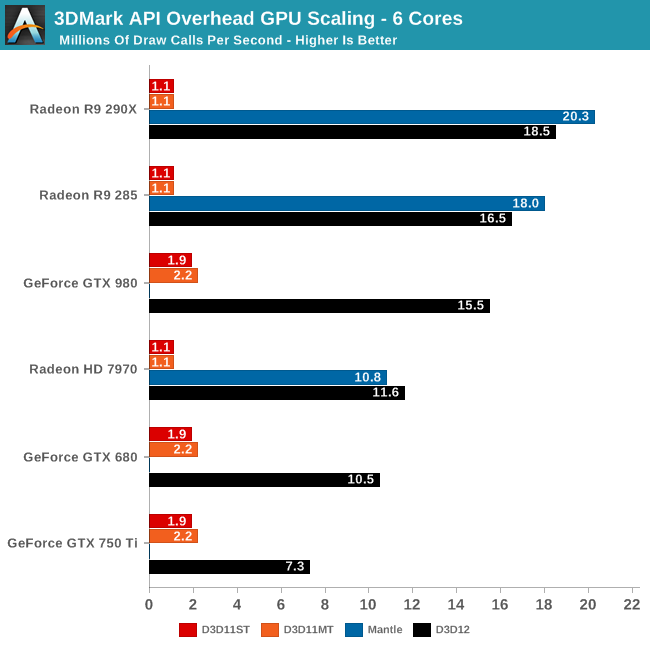

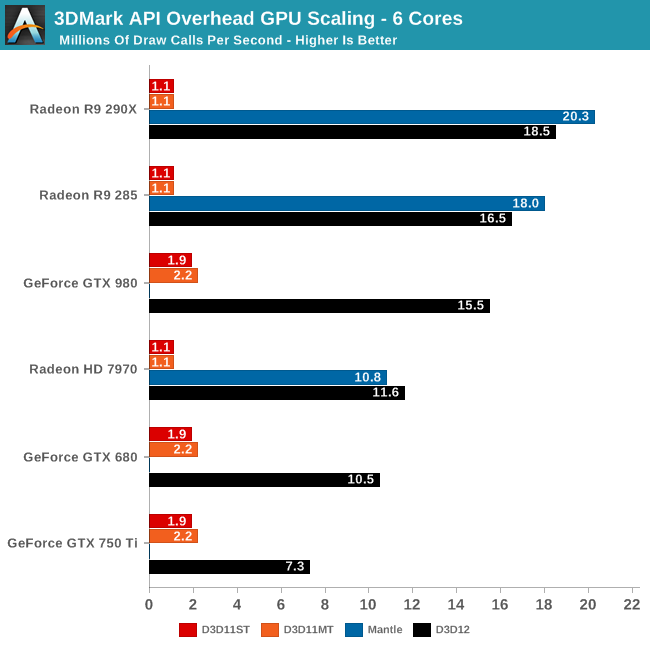

I'm pretty sure it was CPU optimization for draw calls. DX11 couldn't use more than one core very efficiently, making it scale poorly on more than four threads.

Regarding GCN 1.0 not getting DX12:

Ah, so it's literally just the CPU optimisations?

So all the stuff around command list/bundles and the descriptor heaps and tables are just 12_0 and 12_1?

From my understanding, 12_0 had the optimisations above, but 12_1 had hardware changes which added more hardware-based optimisations for voxels and enabled real-time GI?

I have no idea why 12.0 and 12 would be different, but to my understanding 12 doesn't have any new features. The CPU optimization is pretty big though, as that has held back a lot of bigger games, and it means you can save something on the CPU by not needing a K series i5.

Well, specifically. I'm trying to stay under $200.

Wait and see what AMD do. If they do a rebadged 290 as a 380 and price it around $200, that is going to be much preferable to a GTX960. It'll probably be more like $250, but you never know.

I thought this was about Gamecube and I was confused.

I'm glad I wasn't the only one.

Yea.... I doubt it. DX is a Microsoft product, I am sure when they built the Xbox One, they also knew what would be required for DX12.1. I mean, these guys are not amateurs, they are Microsoft.

Microsoft did not build the GPU.....

The company doesn't revolve around Xbox, either.

There is no DX12.1, there is only DX12 and its Feature-Levels 11_0, 11_1, 12_0 and 12_1.

The consoles are using GCN Gen 2 / GCN 1.1 / IP v7 / Sea Islands, you name it.

FL12_0 is the highest level they support (from a DX12 perspective).

FL12_1 needs serious hardware modifications, and the console technology is too old for that.
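To make the "one API, several feature levels" point concrete, here is a small Python sketch. It is a toy model and not the Direct3D API: `FeatureLevel` and `can_run` are hypothetical names, and the only things taken from the post above are the four levels and their ordering.

```python
from enum import IntEnum


class FeatureLevel(IntEnum):
    # The four feature levels listed above, in ascending order.
    FL_11_0 = 0
    FL_11_1 = 1
    FL_12_0 = 2
    FL_12_1 = 3


def can_run(gpu_max: FeatureLevel, required: FeatureLevel) -> bool:
    """A GPU meets a requirement if its highest level is at least the required one."""
    return gpu_max >= required


if __name__ == "__main__":
    console_gpu = FeatureLevel.FL_12_0  # per the post above, consoles top out at 12_0
    print(can_run(console_gpu, FeatureLevel.FL_11_1))  # True
    print(can_run(console_gpu, FeatureLevel.FL_12_1))  # False
```

The takeaway is that "supports DX12" and "supports feature level 12_1" are separate questions: in this model a card can sit at any of the four levels and still be a DX12 card.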

But surely they customized it knowing that DX12 was in the pipeline and what would be required?

But when developers start to adopt DX12, will they even care about 12_1 since they can target way, way more GPUs by sticking to 12_0?

Didn't we already go through this with DX 10.1 and 11.1? How many games even required a GPU with those feature sets? I can't recall many. Why should we believe this will be any different, even though DX 12_0 and 12_1 are becoming available at the same time rather than spaced apart like the previous feature levels?

If the XB1 is also 12_0 only then it gives devs even less incentive to target 12_1 even for ports.

I'm making certain that my next GPU supports 12_1 but I'm wondering if it will even matter.

Found this in an article: http://www.anandtech.com/show/8544/microsoft-details-direct3d-113-12-new-features

(There are the two features included in level 12_1 that I've found)

It's of course very unlikely that FL12_1 will be a prominent requirement.

I only see very few software vendors/games using it for PC-exclusive features.

I assume this will be more of a job for the various hardware vendors, with small middleware like VXGI from Nvidia, which uses Tiled Resources Tier 3 and Conservative Rasterization for a global illumination solution.
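The "optional extra, not a requirement" idea can be sketched like this in Python. Everything here is hypothetical: `GpuCaps`, `pick_gi_path` and the path names are invented for illustration and are not VXGI's or Direct3D's actual interface. The only assumption borrowed from the post above is that a voxel GI path wants Conservative Rasterization and Tiled Resources Tier 3, while every other card falls back to a baseline path.

```python
from dataclasses import dataclass


@dataclass
class GpuCaps:
    """Hypothetical capability record for one GPU."""
    conservative_raster: bool
    tiled_resources_tier: int


def pick_gi_path(caps: GpuCaps) -> str:
    """Use the fancier GI path only when the hardware has what it needs."""
    if caps.conservative_raster and caps.tiled_resources_tier >= 3:
        return "voxel_gi"
    # Everything else still runs the game, just without the extra effect.
    return "baseline_lighting"


if __name__ == "__main__":
    print(pick_gi_path(GpuCaps(conservative_raster=True, tiled_resources_tier=3)))
    print(pick_gi_path(GpuCaps(conservative_raster=False, tiled_resources_tier=2)))
```

Written this way, the game never refuses to start on a 12_0 card; the 12_1-class features just switch on an extra effect where they happen to be available.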

Except they designed the features. AMD just provided the base hardware and manufacturing. It is like how Apple designs their CPUs but Samsung manufactures them.

There's no way in hell Microsoft did not anticipate and design the Xbox One accordingly.

I am more interested in having fast, one day free again, Order Independent Transparency... The Dreamcast's GPU was doing it (HW translucency sorting, basically the same end result) in HW years ago...

For free you won't get it, but vastly cheaper than before with ROVs.

So MS ended up designing a GPU that's JUST like the GPU Sony had designed for them by AMD and it performs better.

Weird. It's like AMD sold Sony MS's GPU design, they should totally sue or something!

The GPU design/technology is the same, sold from AMD to both of them.

Microsoft did not build the GPUs, though. If AMD simply didn't have the capability for it, then there's nothing else to do. Microsoft aren't going to limit the development of DX12 just because there are a few things the Xbox couldn't do. Like I said, the company doesn't revolve around the Xbox.

Isn't the XB1 GPU based on Pitcairn?

Are the differences between those two large enough to include DX12 support?

Let me clarify. AMD provided the base hardware, let's assume an HD 7970 GPU. Microsoft took that core product, added some features, removed some features, and redesigned some features. It is now a heavily modified HD 7970 that is in most ways better than the original 7970, but it may also be missing features that Microsoft thought the Xbox One didn't need, making it worse than the 7970 in those aspects.

Sony did the same thing with the PS4. They took that base GPU and heavily modified it. Just because the core is the same as the Xbox One GPU does not mean they are both the same in every single way. In some features the PS4 clearly has the advantage, like the extra ROPs and all that other stuff. The Xbox One version, on the other hand, has a higher clock, maybe because Microsoft modified something, e.g. the cooling, to keep it cool enough at that clock. AMD then just manufactures the GPU to the specifications given by Microsoft/Sony.

The same process then repeats with the CPU. Microsoft and Sony took that base Jaguar CPU and they each modified it to their liking. Of course with a CPU there is less headroom for customization, but some things can be modified. As an example, the Xbox One Jaguar runs at a higher clock speed, and again this is probably tied to the cooling design Microsoft has for the rest of the system.

Some of the hardware, such as the RAM, is just base hardware and not modified. The same can be said for the HDD, but these are probably tested more thoroughly to ensure they last an entire generation.

Is this clearer?

No, not really. It's more likely MS and Sony provided specifications of what they wanted their SoCs to be capable of, and then AMD designed them.