ethomaz

Is this implying that the negative impact of using a hypervisor has been effectively removed by their page descriptor cache?

Probably.

We'd need to know if it was the same case for XB1, but I guess the % increase is over XB1.

Hypervisor is nothing unique or bad. The PS4 uses a Hypervisor as well.

According to the DF specs reveal, it wasn't; this is new for the Xbox One X CPU.

That is really good... they can take more from the hardware.

285 GB/s just for the GPU is really good.

I remember reading months back about some new encoding method/codec that would allow for great quality but still be reasonable in size.

Has there been any word on whether the HDMI passthrough supports 4k/HDR?

Seems to negate the crossbar argument that Ian Cutress at AnandTech put forth, which posited that a ROP mismatch suggested a crossbar limiting peak bandwidth to 218 GB/s.

HDMI-in supports only version 1.4!

Looks like they doubled the number of ACEs compared with XB1... it is still half of PS4/Pro.

I think the Pro had people running wild enough with 2 gpus on a console, spare em

Well to be fair, the article said it was just a conjecture.

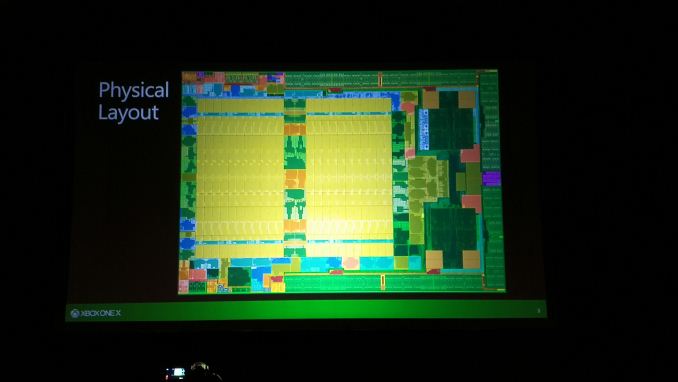

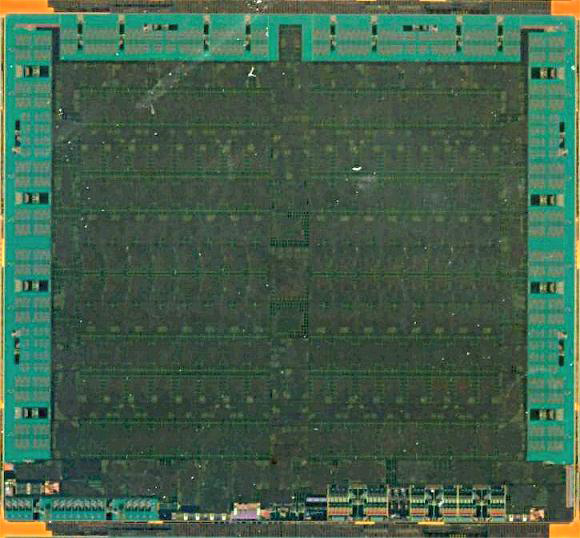

We still haven't seen the PS4 Pro die but I expect it to be the same way with 2 GPU arrays next to each other just like this.

GCP is likely HWS in AMD's terminology, meaning that it's able to handle two queues of any kind (graphics is the most universal context in DX). This means that they went from 4+2 to 4+4 max for compute queues, which is essentially the same as what PS4/Pro has.

It also makes sense from a utilization point of view as with GCN shader core getting wider you want more contexts in flight to be able to hide these execution bubbles.

PS4/Pro has 8 compute processors.

XB1 has 2 compute processors.

XB1X has 4 compute processors.

That is what AMD calls an ACE.

If you add the graphics command processor you will have: 8x2, 2x2 and 4x2 respectively.

It is doubled from XB1 but half of PS4/Pro.

How is this likely to affect graphics performance in comparisons between PS4 / PS4 Pro and XB1X?

Depends how much devs use asynchronous compute in their game.

So the CPU gains are:

- 30% more clock (likely most of the new performance gains)

- up to 20% less latency (but no info on how that translates into real world performance)

- Code translation for the virtualized environment runs 4.5+% faster than on the regular Xbox One; apparently that practically negates the virtualization costs.
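As a rough sanity check on the 30% clock figure, here is a minimal sketch. It assumes the publicly listed CPU clocks of 1.75 GHz for the original Xbox One and 2.3 GHz for Xbox One X; those two numbers come from public spec sheets, not from anything posted in this thread.

```python
# Assumed CPU clocks (public spec sheets, not from the slides in this thread).
XB1_CLOCK_GHZ = 1.75
XB1X_CLOCK_GHZ = 2.3


def clock_uplift(old_ghz: float, new_ghz: float) -> float:
    """Return the relative clock increase as a fraction (0.30 means 30%)."""
    return new_ghz / old_ghz - 1.0


uplift = clock_uplift(XB1_CLOCK_GHZ, XB1X_CLOCK_GHZ)
print(f"Clock uplift: {uplift:.1%}")
```

Under those assumed clocks the uplift lands a bit above 30%, which fits the first bullet. It says nothing about the latency or translation gains, which can't be derived from clocks alone.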

This is the most complete information drop we have received for Xbox One X. I can't believe this thread isn't 10 pages by now.

Ppl don't know what the hell they are looking at here lol

Actually the PS4 (Pro) has two MECs (Micro Engine Compute), where each one handles up to 32 compute queues.

https://www.phoronix.com/forums/forum/linux-graphics-x-org-drivers/open-source-amd-linux/856534-amdgpu-questions?p=857850#post857850

There are two different "command processor" blocks in CI and above:

- "ME" (Micro Engine, aka the graphics command processor, called CP on pre-CI when it was the only command processor in the chip)

- "MEC" (Micro Engine Compute, aka the compute command processor)

Some chips have two MEC's, other parts have only one. So far one MEC (up to 32 queues) seems to be more than enough to keep the shader core fully occupied.

The MEC block has 4 independent threads, referred to as "pipes" in engineering and "ACEs" (Asynchronous Compute Engines) in marketing. One MEC => 4 ACEs, two MECs => 8 ACEs. Each pipe can manage 8 compute queues, or one of the pipes can run HW scheduler microcode which assigns "virtual" queues to queues on the other 3/7 pipes.
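The arithmetic in that quote can be sketched as below. The constants are taken straight from the quoted text (4 pipes per MEC, 8 queues per pipe); the function name and dictionary keys are just made up for illustration.

```python
PIPES_PER_MEC = 4    # "pipes" in engineering, "ACEs" in marketing
QUEUES_PER_PIPE = 8  # each pipe can manage 8 compute queues


def mec_summary(num_mecs: int) -> dict:
    """Derive ACE and queue counts for a chip with `num_mecs` MEC blocks."""
    aces = num_mecs * PIPES_PER_MEC
    return {
        "mecs": num_mecs,
        "aces": aces,
        "compute_queues": aces * QUEUES_PER_PIPE,
        # If one pipe runs the HW scheduler microcode, the rest take the queues.
        "pipes_left_with_hws": aces - 1,
    }


one_mec = mec_summary(1)
two_mecs = mec_summary(2)
print(one_mec)
print(two_mecs)
```

So a single MEC gives the "up to 32 queues" figure, and two MECs give 8 ACEs, which is where the PS4/Pro count in this thread comes from.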

How is this likely to affect graphics performance in comparisons between PS4 / PS4 Pro and XB1X?

Like zero.

You can't just compare different terminology like this. XBO has two compute command processors but two graphics command processors as well, and as far as we know these "GCP" can be what AMD calls HWS, each able to handle two submission queues. Generally speaking, a "graphics command processor" should be able to submit anything, since you can submit compute inside a graphics context in DX but not vice versa.

So you likely have 8 vs 6 vs 8 for pure compute contexts.
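To show where the 8 vs 6 vs 8 comes from, here is a small sketch of that counting. It leans entirely on the conjecture above: each Xbox "GCP" is treated as able to take two submission queues, both Xbox consoles are assumed to have two GCPs, and PS4/Pro is counted by its 8 ACEs only. None of that is confirmed by the slides.

```python
QUEUES_PER_GCP = 2  # conjecture from the post: a GCP behaves like an HWS

# Compute command processors and GCPs per console, as counted in this thread.
consoles = {
    "PS4/Pro": {"compute": 8, "gcp": 0},  # counted by ACEs only
    "XB1": {"compute": 2, "gcp": 2},
    "XB1X": {"compute": 4, "gcp": 2},
}


def compute_contexts(cfg: dict) -> int:
    """Pure compute contexts if every GCP queue can also carry compute."""
    return cfg["compute"] + cfg["gcp"] * QUEUES_PER_GCP


totals = {name: compute_contexts(cfg) for name, cfg in consoles.items()}
print(totals)
```

If the GCP conjecture is wrong, the Xbox totals drop back to the plain 2 and 4 compute processors.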

Ppl don't know what the hell they are looking at here lol

I know nothing about this so I'm going to ask some simple Q's.

- up to 20% less latency (but no info on how that translates into real world performance)

Cache misses are quite costly for a CPU, so this should give a nice boost.

Looks like they doubled the number of ACEs compared with XB1... it is still half of PS4/Pro.

That's a point of interest, but keep in mind that unlike an ROP or TMU or something, twice the number doesn't indicate twice the compute capability. More ACEs mean more efficient scheduling, but don't increase the total compute capability of the chip.

Say for instance 4 ACEs meant 80% efficiency, 8 meant 90%, while the former had a compute performance of 10 and the latter had a compute performance of 6. Totally pulled out my ass, but to get the idea.
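The made-up numbers above work out like this. It's only a toy model, assuming effective throughput is simply raw compute times scheduling efficiency; the efficiency values and compute scores are the invented ones from the post, not measurements.

```python
def effective_compute(raw_compute: float, scheduling_efficiency: float) -> float:
    """Toy model: usable throughput = raw compute x scheduling efficiency."""
    return raw_compute * scheduling_efficiency


# Invented figures from the post above, purely illustrative.
chip_with_4_aces = effective_compute(raw_compute=10, scheduling_efficiency=0.80)
chip_with_8_aces = effective_compute(raw_compute=6, scheduling_efficiency=0.90)

print(f"4 ACEs, raw 10: {chip_with_4_aces:.1f}")
print(f"8 ACEs, raw 6:  {chip_with_8_aces:.1f}")
```

Even with better scheduling, the chip with more ACEs ends up behind in this example because it started with less raw compute.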

Speaking of ROPs isn't it most likely that PS4 Pro has 64 ROPs because it has to have 32 ROPs when half of the GPU is working in PS4 mode?

I don't believe it has any change in ROP count... it is not needed either.

It could be like Polaris 10, which has 8 ROPs per shader engine, which would mean it has 32.

Since the Pro seems to mostly target halfway to 4K/2x 1080p before checkerboarding, the pixel throughput would make sense. PS4 was overkill on ROPs for 1080p. 64 ROPs for 2K would also be overkill.

It's a bit of a bother that 2013 "give the specs to the people" Sony is now mum on the ROP count, though. 32 seems most likely from what I see.
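For the "halfway to 4K / 2x 1080p" point, a quick pixel-count sketch, assuming the usual 1920x1080 and 3840x2160 frame sizes (the ROP counts themselves are still guesses in this thread, so they are left out):

```python
def pixels(width: int, height: int) -> int:
    """Number of pixels in one frame."""
    return width * height


P1080 = pixels(1920, 1080)
P4K = pixels(3840, 2160)
PRO_TARGET = 2 * P1080  # "2x 1080p" before checkerboarding

print(f"1080p:      {P1080:,} pixels")
print(f"2x 1080p:   {PRO_TARGET:,} pixels")
print(f"4K:         {P4K:,} pixels")
print(f"Pro target is {PRO_TARGET / P4K:.0%} of a 4K frame")
```

So if 32 ROPs were already overkill at 1080p, doubling the pixel load with the same ROP count is the scenario being argued for here.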

I don't believe it has any change ROPs count... it is not needed too.

I guess there's that, no change = no republish. If they doubled the ROPs I think they would have said it.

It can't be 8 per SE because that would drop PS4 Pro ROPs to 16 when in PS4 mode.

I remember reading months back about some new encoding method/codec that would allow for great quality but still be reasonable in size.

H.265? On average it allows for the same video quality as the previous H.264 at a ~33%-48% lower bit rate.
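To put the ~33%-48% figure in concrete terms, here is a minimal sketch. The 20 Mbps H.264 starting point is a hypothetical number picked for illustration, not a real Xbox capture setting.

```python
# Savings range quoted above for H.265 versus H.264 at similar quality.
MIN_SAVING = 0.33
MAX_SAVING = 0.48


def h265_bitrate_range(h264_mbps: float) -> tuple[float, float]:
    """Return (lowest, highest) H.265 bitrate for similar quality, in Mbps."""
    return h264_mbps * (1 - MAX_SAVING), h264_mbps * (1 - MIN_SAVING)


H264_EXAMPLE_MBPS = 20.0  # hypothetical source bitrate
low, high = h265_bitrate_range(H264_EXAMPLE_MBPS)
print(f"H.264 at {H264_EXAMPLE_MBPS} Mbps -> H.265 at roughly {low:.1f}-{high:.1f} Mbps")
```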

It can't be 8 per SE because that would drop PS4 Pro ROPs to 16 when in PS4 mode.

I don't believe PS4 mode disables ROPs... it only allocates half of the CUs to work.

Microsoft Describes Next Xbox SoC

Scorpio picks 12GB GDDR5 over HBM memory

The single large block of memory enables much simpler tools for application developers, Sell said. It also emulates the embedded SRAM for compatibility with apps written for the prior console.

Microsoft kicked the tires of the kinds of HBM modules AMD pioneered in GPUs in 2015 and Nvidia uses now on Volta. "But for a consumer product HBM2 is too expensive and inflexible...its memory bandwidth is not as granular, and we would be locked into [an HBM] module," Sell said.

It's too early to tell what the next generation trade-offs will look like, but both a Jedec GDDR6 and an HBM3 are in the works. A major cost issue for HBM is a lack of test coverage and thus relatively low yields, he said.

I mean, with all the talk of Microsoft not doing console generations anymore, etc., it's good to know they're looking into HBM3 and GDDR6 for future Xbox consoles.

Why is there a DP1.2a path between SB and SoC? Or is this their way of saying that the content from BD is HDCP protected?

Perhaps that is what they move the HDMI-in through?

I thought it was supposed to support HDMI 2.1. Does 2.0b support VRR?

So Scorpio will only use a SATA2 interface for the HDD. BUT: SATA2 is completely fine - I expect most savings coming from the CPU decompressing data when looking at load times.
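A rough sketch of why SATA2 is unlikely to be the bottleneck for a hard drive. The 3 Gbit/s line rate and 8b/10b encoding are standard for SATA II; the 120 MB/s sustained HDD read speed is an assumed ballpark for a laptop-class drive, not a measured Scorpio figure.

```python
SATA2_LINE_RATE_GBPS = 3.0     # SATA II raw line rate in Gbit/s
ENCODING_EFFICIENCY = 8 / 10   # 8b/10b encoding: 8 payload bits per 10 line bits
ASSUMED_HDD_MB_S = 120.0       # assumed sustained HDD read speed (ballpark)


def sata_payload_mb_s(line_rate_gbps: float) -> float:
    """Usable payload bandwidth in MB/s after 8b/10b encoding overhead."""
    payload_bits_per_s = line_rate_gbps * 1e9 * ENCODING_EFFICIENCY
    return payload_bits_per_s / 8 / 1e6


sata2_mb_s = sata_payload_mb_s(SATA2_LINE_RATE_GBPS)
headroom = sata2_mb_s / ASSUMED_HDD_MB_S
print(f"SATA2 payload bandwidth: {sata2_mb_s:.0f} MB/s")
print(f"Headroom over the assumed HDD: {headroom:.1f}x")
```

With a mechanical drive the link has plenty of room; the interface would only start to matter with an SSD that can exceed roughly 300 MB/s.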

Now that's a summation I can understand and get behind!

You should work at DF, interpreting the news for dumbos like me

Ha, I'm only slightly above dumbos atm; in time though I will be MLG ^_^.

I don't know if it has been posted yet but apparently one slide is missing:

So Scorpio will only use a SATA2 interface for the HDD. BUT: SATA2 is completely fine - I expect most savings coming from the CPU decompressing data when looking at load times.

Thanks for that, was able to hunt down better images.

Still SATA2? Why MS, why?

P.S. Have they released a full slide deck? Does anybody have a link to it?

Only thing I found so far is a pretty picture from a German site. Nothing on Hot Chips that I can see.